Important (Advice)

I utilize elevated diction to ensure precision. Use of Google Search is strongly advised for a thorough understanding of the following reading.

Abstract

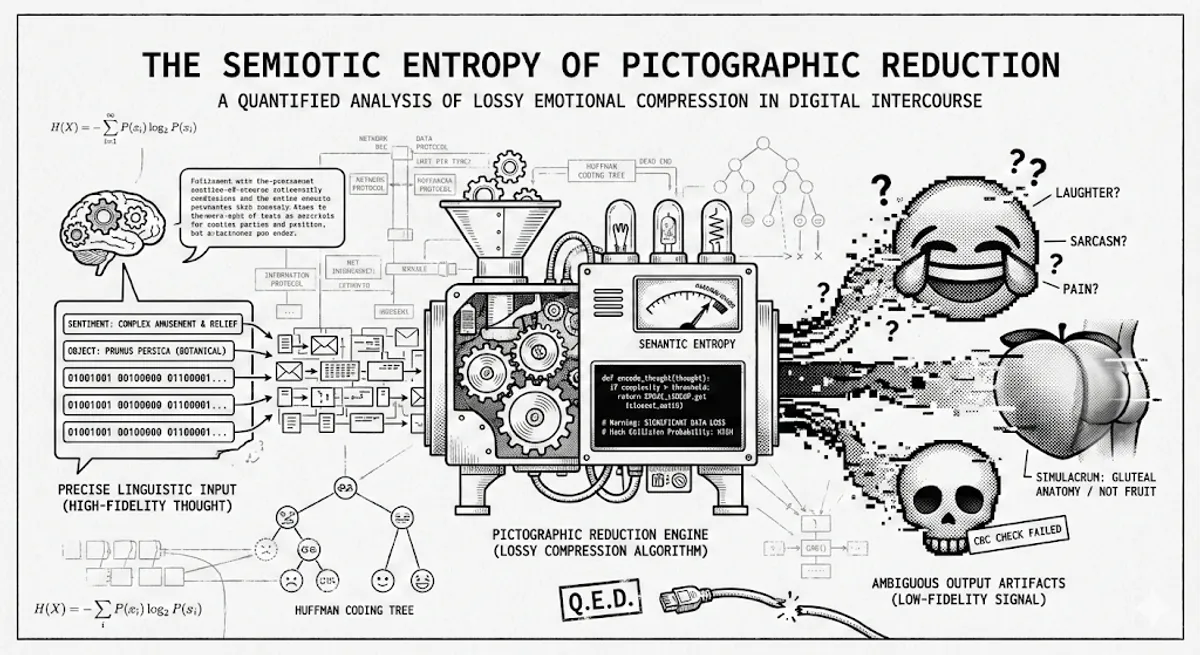

It is a generally accepted axiom among the intellectually unencumbered that a picture is worth a thousand words. This is, demonstrably, a falsehood. In the context of modern digital communication, a picture—specifically the Unicode Standard pictograph—is often worth approximately zero intelligible concepts. This treatise examines the widespread proliferation of emojis not as a playful evolution of language, but as a catastrophic implementation of Lossy Compression. We shall apply Claude Shannon’s Information Theory and Huffman Coding principles to demonstrate that while emojis optimize for transmission bandwidth (low bit cost), they maximize Semantic Entropy (high interpretation error). By replacing precise linguistic signifiers with ambiguous graphical artifacts, humanity is subjecting its collective consciousness to a “digital bit-rot” of the highest order.

I. The Axiom of Linguistic Decay

Language, in its optimal state, is a protocol. Much like TCP (Transmission Control Protocol), it relies on a handshake of understanding between Sender and Receiver. I encode a thought into syntax; you decode syntax into thought. If the protocol is robust, the thought you decode is the thought I encoded.

However, the modern “screenager” (a pejorative I use with clinical precision) has abandoned this robust protocol in favor of a heuristic shortcut: the Emoji. This is metabolically convenient, certainly. Writing “I am experiencing a complex mixture of relief and amusement regarding your recent failure” requires significant caloric expenditure and many keystrokes. Tapping a yellow face shedding tears (😂, U+1F602) requires one.

But we must ask: Is this optimization? Or is it merely data corruption disguised as efficiency?

To understand this, we must view human conversation through the lens of Data Compression. There are two types:

- Lossless Compression: The data is reduced in size but reconstructs perfectly (e.g., ZIP, PNG).

- Lossy Compression: The data is reduced significantly, but information is permanently discarded to save space (e.g., JPEG, MP3).
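The two categories above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a formal model: `zlib` stands in for a lossless codec, and the hypothetical `EMOJI_CODEBOOK` dictionary (invented here) stands in for a lossy one in which distinct sentences collapse to the same symbol.

```python
import zlib

message = "I am experiencing a complex mixture of relief and amusement."

# Lossless: compress, decompress, and recover the exact original text.
packed = zlib.compress(message.encode("utf-8"))
restored = zlib.decompress(packed).decode("utf-8")
lossless_ok = restored == message

# Lossy (hypothetical codebook, for illustration only): distinct sentences
# map to the same symbol, so the original cannot be recovered from it.
EMOJI_CODEBOOK = {
    "I am experiencing a complex mixture of relief and amusement.": "😂",
    "I am laughing politely to end this conversation.": "😂",
}

def lossy_encode(text: str) -> str:
    """Replace a known sentence with its emoji; unknown sentences become '?'."""
    return EMOJI_CODEBOOK.get(text, "?")

def lossy_decode(symbol: str) -> list[str]:
    """Return every sentence that could have produced the symbol."""
    return [text for text, emoji in EMOJI_CODEBOOK.items() if emoji == symbol]

candidates = lossy_decode(lossy_encode(message))
print(lossless_ok)       # True
print(len(candidates))   # 2
```

The lossless round trip returns exactly one message. The lossy round trip returns a list of suspects.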

I posit that the transition from English to Emoji is a form of aggressive Lossy Compression, resulting in a conversational artifacting so severe that the original message is often unrecoverable.

II. The Bit-Wise Efficiency of the ‘Tears of Joy’ (😂)

Let us conduct a code-level autopsy of the efficiency gains. We shall compare the standard English expression of amusement against its Unicode equivalent.

Consider the phrase: “That is incredibly humorous.”

In a standard Python environment utilizing UTF-8 encoding, we can calculate the exact memory footprint of this sentiment.

```python
import sys

# The verbose, unambiguous sentiment
sentiment_lossless = "That is incredibly humorous."

# The ambiguous, slothful equivalent (Tears of Joy)
sentiment_lossy = "😂"

# Calculate size in bytes (sys.getsizeof includes Python object overhead)
size_lossless = sys.getsizeof(sentiment_lossless.encode('utf-8'))
size_lossy = sys.getsizeof(sentiment_lossy.encode('utf-8'))

print(f"Lossless Size: {size_lossless} bytes")
# Output: 61 bytes (approx, depending on overhead)

print(f"Lossy Size: {size_lossy} bytes")
# Output: 37 bytes (overhead included) or 4 bytes raw payload
```

Mathematically, the Compression Ratio ($CR$) is defined as:

$$CR = \frac{\text{Uncompressed Size}}{\text{Compressed Size}}$$

In the raw payload comparison (ignoring Python object overhead), the string is 28 bytes. The emoji is 4 bytes. The ratio is therefore $28 / 4 = 7$.

A 7:1 compression ratio is impressive for a file system. However, in Information Theory, compression is only viable if the decoding is accurate. If I zip a file and, when you unzip it, 15% of the data is random noise, the algorithm is a failure.
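The raw payload figures can be checked directly by measuring the encoded bytes with `len` rather than `sys.getsizeof`. A minimal sketch; the helper name `raw_utf8_size` is introduced here purely for illustration.

```python
def raw_utf8_size(text: str) -> int:
    """Number of bytes in the UTF-8 encoding, without Python object overhead."""
    return len(text.encode("utf-8"))

verbose = "That is incredibly humorous."
emoji = "😂"

verbose_bytes = raw_utf8_size(verbose)   # 28 ASCII characters, 1 byte each
emoji_bytes = raw_utf8_size(emoji)       # one code point above U+FFFF, 4 bytes
ratio = verbose_bytes / emoji_bytes

print(f"{verbose_bytes} bytes vs {emoji_bytes} bytes, ratio {ratio:.0f}:1")
# 28 bytes vs 4 bytes, ratio 7:1
```

Note that this measures only transmission cost. It says nothing about whether the receiver can reconstruct the sender's meaning, which is the question the ratio conveniently ignores.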

When you send “😂”, you are relying on a lookup table in the receiver’s brain. But unlike the ASCII table, which is standardized, the “Emoji Lookup Table” in the human cortex is mutable, inconsistent, and plagued by sociological noise.

III. Shannon Entropy and the Semantic Ambiguity Floor

Claude Shannon, the father of Information Theory, gave us the formula for Entropy ($H$), a measure of uncertainty or “surprise” in a message:

$$H(X) = -\sum_{i} P(x_i) \log_2 P(x_i)$$

In a rigorous English sentence, the Conditional Entropy (the uncertainty of the meaning of a word given the previous words) is relatively low. “The cat sat on the…” implies “mat” or “couch” with high probability.

Now, consider the Emoji. It lacks syntax. It lacks grammar. It is a floating packet of data without a header.

When a user sends “😂”, the probability distribution of the intended meaning splits into a chaotic array:

- $P(x_1)$: “I am laughing literally.”

- $P(x_2)$: “I am laughing politely to end this conversation.”

- $P(x_3)$: “I am mocking you.”

- $P(x_4)$: “I am in extreme pain, masked by humor.”

Because $P(x)$ is spread across multiple mutually exclusive meanings, the Entropy is high, and it is maximized when every meaning is equally likely.
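To put a number on this, here is a small sketch of Shannon's entropy applied to the four readings. The probabilities are hypothetical, chosen only to contrast a receiver with no context against one with strong context; nothing here is measured data.

```python
import math

def shannon_entropy(probabilities: list[float]) -> float:
    """Entropy in bits of a discrete distribution; zero terms contribute 0."""
    return -sum(p * math.log2(p) for p in probabilities if p > 0)

# Hypothetical distributions over the four candidate meanings.
uniform = [0.25, 0.25, 0.25, 0.25]   # receiver has no idea which is meant
skewed = [0.85, 0.05, 0.05, 0.05]    # context makes one reading dominant

h_uniform = shannon_entropy(uniform)
h_skewed = shannon_entropy(skewed)

print(h_uniform)            # 2.0
print(h_skewed < h_uniform)  # True
```

With four equally likely meanings the receiver faces the full 2 bits of uncertainty, the maximum for four outcomes. Context lowers the figure, but a lone emoji supplies no context.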

In Computer Science, we call this a Hash Collision. You have mapped multiple distinct inputs (Real Laughter, Sarcasm, Pain) to a single output hash (😂). Since you cannot reverse a hash function, the receiver cannot know the original input.
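The collision can be made concrete with a toy many-to-one mapping. This is a sketch, not a real hash function: `INTENT_TO_EMOJI` is a hypothetical table invented for illustration, and `invert` simply groups inputs by the output they share.

```python
from collections import defaultdict

# Hypothetical "emotional hash function": several intents share one output.
INTENT_TO_EMOJI = {
    "real laughter": "😂",
    "sarcasm": "😂",
    "pain": "😂",
    "approval": "👍",
}

def invert(mapping: dict[str, str]) -> dict[str, list[str]]:
    """Group inputs by output so that collisions become visible."""
    buckets: dict[str, list[str]] = defaultdict(list)
    for intent, emoji in mapping.items():
        buckets[emoji].append(intent)
    return dict(buckets)

preimages = invert(INTENT_TO_EMOJI)
print(preimages["😂"])   # ['real laughter', 'sarcasm', 'pain']
print(preimages["👍"])   # ['approval']
```

The receiver holding “😂” can narrow the sender's intent to a bucket, but never to a single entry.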

Therefore, using emojis is not communication; it is cryptographically secure obfuscation of your true feelings.

IV. The Huffman Coding of Social Laziness

Why, then, do humans persist in this inefficiency? The answer lies in Huffman Coding.

In Huffman coding, the most frequently used characters are assigned the shortest binary codes. In the “Human Social Operating System,” the most frequent emotional states are assigned the shortest physical actions to minimize metabolic cost.

- Complex Theory: “I validate your statement.” High effort.

- Huffman Optimization: “👍” Low effort.

The human brain is running a greedy algorithm. It chooses the path of local optimization (least typing now) regardless of the global cost (confusion later).
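For readers who want to see the greedy algorithm itself, here is a compact sketch of Huffman code construction using the standard `heapq` module. The sample "message log" is hypothetical, and the function assumes non-empty input.

```python
import heapq
from collections import Counter

def huffman_codes(text: str) -> dict[str, str]:
    """Build a prefix code in which frequent symbols get shorter bit strings."""
    freq = Counter(text)
    if len(freq) == 1:                      # degenerate single-symbol input
        return {next(iter(freq)): "0"}
    # Heap entries: (weight, unique tie-breaker, {symbol: code so far}).
    heap = [(w, i, {sym: ""}) for i, (sym, w) in enumerate(freq.items())]
    heapq.heapify(heap)
    next_id = len(heap)
    while len(heap) > 1:
        w1, _, left = heapq.heappop(heap)   # greedy step: two lightest subtrees
        w2, _, right = heapq.heappop(heap)
        merged = {s: "0" + c for s, c in left.items()}
        merged.update({s: "1" + c for s, c in right.items()})
        heapq.heappush(heap, (w1 + w2, next_id, merged))
        next_id += 1
    return heap[0][2]

# Hypothetical message log: the laughing face dominates the traffic.
codes = huffman_codes("😂😂😂😂😂😂😂😂👍👍👍💀🍑")
for symbol, code in sorted(codes.items(), key=lambda kv: len(kv[1])):
    print(symbol, len(code))
```

In this sample the most frequent symbol receives a 1-bit code, the next a 2-bit code, and the two rare symbols 3 bits each. Unlike the social version, a Huffman code is prefix-free and decodes without ambiguity.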

This leads to what I call the “Peach Paradox”, which brings us to the realm of Semiotics.

V. Baudrillard and the Peach: A Study in Simulacra

Ferdinand de Saussure distinguished between the Signifier (the word/image) and the Signified (the concept).

In 2010, the Unicode Consortium released U+1F351 (🍑).

- Original Signified: The fruit of Prunus persica, a species in the rose family.

- Current Signified: Portions of the gluteal anatomy.

This is a semantic drift so violent it would make a prescriptivist linguist weep. It is an example of Jean Baudrillard’s Simulacrum: The image has not only replaced the reality; it has murdered the reality.

If one attempts to discuss botany using emojis, one will inevitably be accused of harassment. This is a “namespace pollution” event. The variable peach has been overwritten by a global variable in the pop-culture library.

This highlights the fatal flaw of pictographic languages. They lack Versioning. English evolves, but we have dictionaries (documentation). Emojis evolve via meme-theory (undocumented patches). You are running code (conversation) on a production server (society) using dependencies (emojis) that have no changelog.

VI. The Resolution: A Return to ASCII

How do we fix this? We treat conversation like a mission-critical codebase.

- Deprecate Ambiguous Libraries: Stop using U+1F602 (Tears of Joy). It is the GOTO statement of emotions: considered harmful.

- Implement CRC (Cyclic Redundancy Checks): If you must use an emoji, you must verify the packet.

  - Bad: “That’s crazy 💀”

  - Good: “That is crazy. (Checksum: By the skull icon, I imply metaphorical death via laughter, not a literal threat to your life).”

- Adopt Verbosity: Do not fear bandwidth. We have 5G. We have fiber optics. You can afford the extra bytes to type “I find that amusing.”
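The checksum proposal in the list above borrows from real practice, which can be sketched with the standard library's `zlib.crc32`. The helper names `with_checksum` and `verify` are hypothetical, and the corrupted message simulates a single flipped bit (`y` and `x` differ in exactly one bit), an error CRC-32 always detects.

```python
import zlib

def with_checksum(message: str) -> tuple[str, int]:
    """Pair a message with the CRC-32 of its UTF-8 bytes."""
    return message, zlib.crc32(message.encode("utf-8"))

def verify(message: str, checksum: int) -> bool:
    """True if the received text still matches the sender's checksum."""
    return zlib.crc32(message.encode("utf-8")) == checksum

sent, crc = with_checksum("That is crazy.")
corrupted = "That is crazx."   # one bit flipped in transit

print(verify(sent, crc))        # True
print(verify(corrupted, crc))   # False
```

A CRC confirms only that the bytes arrived intact. Confirming that the meaning arrived intact still requires the parenthetical.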

VII. Conclusion

The “Tears of Joy” emoji is not a symbol of happiness. It is a symbol of a heuristic stall. It represents the moment the human brain encountered a complex emotion, failed to parse it into language, and threw a NullReferenceException in the form of a yellow cartoon face.

We are not cavemen painting on walls. We are the architects of the information age. Stop compressing your soul into 4 bytes.

Q.E.D.